Apple’s Review Process Was Built for a World That No Longer Exists

In the age of AI, building software has never been cheaper or faster. The competitive moat is shifting — away from who can build first, toward who can iterate fastest. Ship five experiments a day, kill the losers by evening, double down on winners by morning. That’s the new tempo.

But if you’re building for iOS, there’s a gate between you and that tempo: Apple’s App Store Review — the process every app and every update must pass through before it reaches a single user.

It’s not that review is broken. It’s that the cadence it was designed for — quarterly releases, polished packages, big-bang launches — belongs to a previous era. And if Apple doesn’t adapt it, the App Store risks becoming the place where iteration velocity goes to die.

What App Store Review Actually Looks Like Today

Start with what Apple claims, because the baseline matters.

Apple’s published figure is that, on average, 90% of submissions are reviewed in less than 24 hours. For a traditional release cadence, shipping every week or two, that’s perfectly fine. But if your competitive loop demands multiple meaningful changes per day, even 24 hours is a batching constraint that forces you to think in chunks instead of thinking in experiments.

Apple already has some relief valves. Expedited review exists for critical bug fixes and time-sensitive events. There’s also a quiet but important carveout: bug-fix submissions for live apps won’t be delayed over guideline violations except for legal or safety issues. That’s Apple tacitly admitting that blocking production hotfixes for policy nitpicks is unreasonable.

Apple has also been reducing how often you actually need to go through the full review process. Things like promotional events, custom store listings, and marketing tests can be submitted without pushing a new version of your app. You can bundle multiple items and remove problematic ones without stalling the rest. These are meaningful improvements — but they don’t change the core dynamic for anything that touches how the app actually behaves.

And then there’s the EU. Under the Digital Markets Act, alternative app distribution now exists in Europe, but Apple still runs every app through a baseline security check called Notarization. Individual alternative marketplaces can layer their own policy review on top. Apple keeps the security gate but partially offloads “curation” to third parties. It’s a hint at how the architecture could evolve everywhere.

What the Velocity Tax Actually Costs

This isn’t hypothetical. Consider what a typical AI-powered app team looks like in 2026.

You have a consumer app — say a photo editing tool with AI features. Your model improves weekly. Your competitors on Android are running 30 A/B tests simultaneously, pushing interface changes hourly, and killing underperforming variants before lunch. You’re doing the same thing on the backend, but every time a test requires a change to the app itself — a new button placement, a tweaked onboarding flow, a feature that needs new client-side logic — you’re packaging a new version, submitting it to Apple, and waiting.

Sometimes it clears in four hours. Sometimes it takes two days. Sometimes it gets flagged for a metadata issue that has nothing to do with your actual change, and now you’re in a back-and-forth that burns a week. Your Android counterpart shipped the same experiment six days ago and already has statistically significant results. You’re still waiting for permission to start.

Multiply this across every AI-native team building on iOS, and you see the structural problem. The review queue doesn’t just slow individual releases — it changes how teams think. You stop designing experiments around “what would we learn fastest” and start designing them around “what can we batch into the next submission.” The iteration loop quietly deforms around the constraint, and most teams don’t even notice how much velocity they’ve lost because they’ve never operated without the bottleneck.

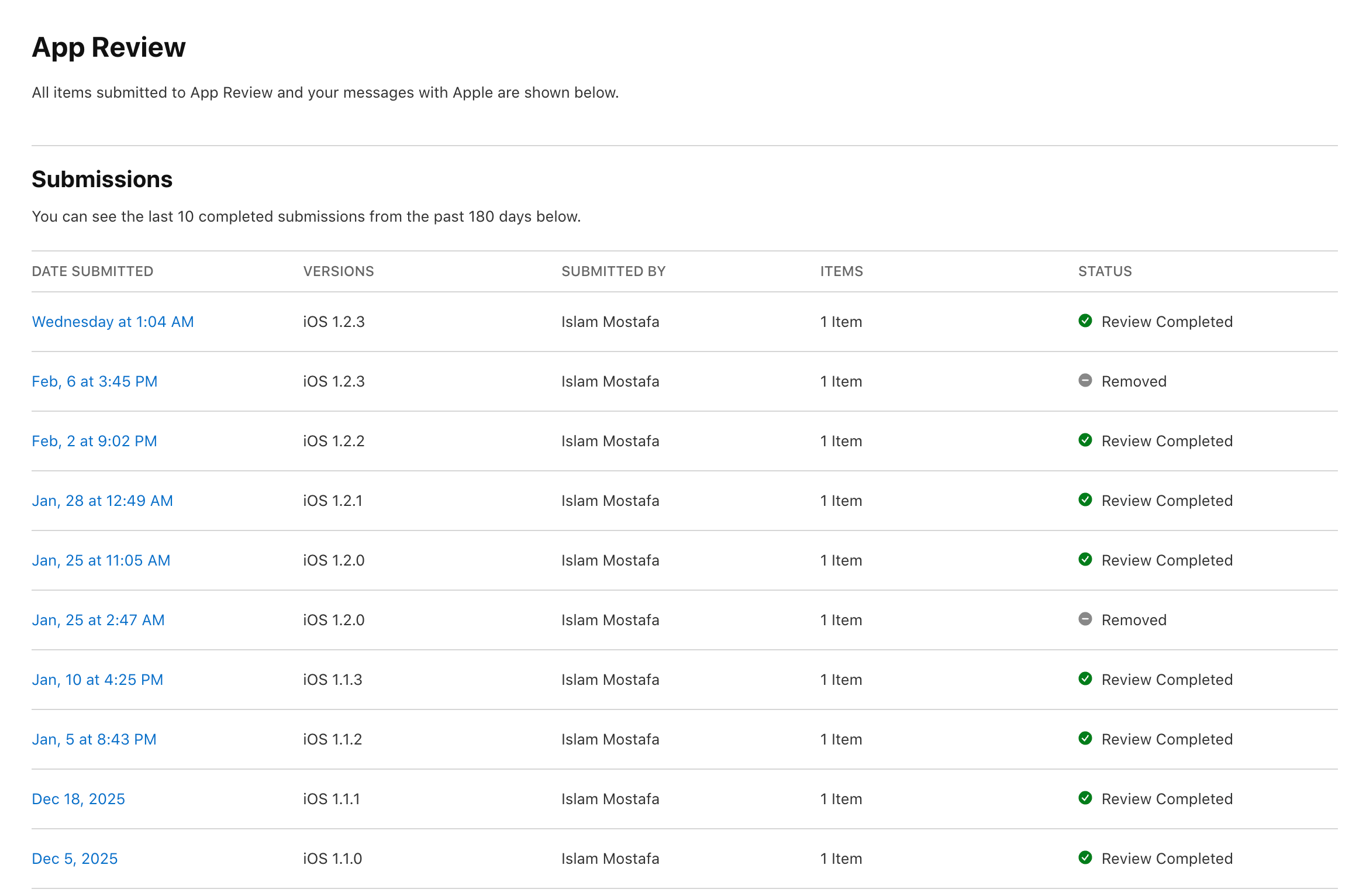

I know this firsthand. Below is my own App Review history for an AI-powered stickers app I’m building — ten submissions over ten weeks, roughly one per week, which is considered fast for iOS.

Two of those ten were “Removed” — pulled and resubmitted after issues. One cost me half a day. The other cost me nearly six. That’s six days where a single version sat frozen while competitors on the web or Android could have shipped the same change in minutes.

But the submissions that went through aren’t free either. Each one represents a batch — changes I accumulated until I had “enough” to justify a submission, rather than shipping the moment something was ready. The review process doesn’t just add latency to individual releases. It changes the shape of how you work. You start thinking in weekly bundles instead of continuous experiments. And at a weekly cadence, you’re already moving faster than most iOS developers. Imagine what you’re leaving on the table.

This isn’t a horror story. There’s no month-long rejection saga here. It’s the quiet, ordinary tax: a week here, a batch there, a rhythm shaped by the gate instead of by the product.

The Contrarian Case: AI Makes the Gate More Necessary

Here’s where the “just remove review” crowd loses the plot: AI doesn’t only make building cheap. It makes abuse cheap too.

Copycats scale faster. Scam apps scale faster. Dark patterns scale faster. AI-generated slop can flood a marketplace overnight. Apple’s core value proposition for the App Store has always been trust — a curated, safe environment where users don’t have to think twice before installing something. In an AI era, the threat to that trust intensifies, which means Apple may rationally become more cautious about review, not less.

So the winning move isn’t “remove review.” It’s to make review adaptive: fast where it can be, rigorous where it must be, and intelligent enough to tell the difference.

What Velocity-Optimized Review Could Look Like (and Why Apple Hasn’t Built It)

The conceptual answer is straightforward: route low-risk updates through fast automated lanes, apply heavy scrutiny only where it matters, reward trustworthy developers with speed, and shift some enforcement from “before launch” to “after launch.” Most modern software deployment systems work this way. Apple’s review, somehow, still doesn’t.

The interesting question is why not. And the answer has less to do with technical feasibility than with incentives, organizational structure, and strategic ambiguity.

Fast lanes for low-risk updates are the most obvious improvement. A text change on your settings screen is not the same as a new payment flow. Everyone knows this. But implementing risk-based routing would require Apple to formally define what counts as low risk and what counts as high risk — which means publishing clear criteria, which means giving up the discretionary power that lets App Review function as both a quality gate and a policy enforcement tool. Apple has historically preferred ambiguity. Ambiguity lets them reject apps for reasons that serve strategic goals without having to defend those reasons against a rulebook. Fast lanes would require a rulebook.
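To make the idea concrete, here is a minimal sketch of what risk-based routing might look like. Everything in it is an assumption for illustration: the change categories, the weights, the thresholds, and the lane names are invented, and Apple publishes nothing of the kind, which is exactly the point.

```python
from dataclasses import dataclass
from enum import Enum


class Lane(Enum):
    AUTOMATED = "automated fast lane"
    SPOT_CHECK = "automated checks plus sampled human review"
    FULL_REVIEW = "full human review"


# Hypothetical risk weights per kind of change. These values are
# illustrative assumptions, not anything Apple has published.
RISK_WEIGHTS = {
    "copy_text": 1,
    "layout": 2,
    "new_screen": 4,
    "new_permission": 8,
    "payment_flow": 10,
}
MAX_WEIGHT = 10


@dataclass(frozen=True)
class Submission:
    app_id: str
    change_kinds: tuple[str, ...]


def route(submission: Submission) -> Lane:
    """Pick a review lane based on the riskiest change in the submission."""
    # Unknown change kinds get the maximum weight: fail closed, not open.
    highest = max(
        (RISK_WEIGHTS.get(kind, MAX_WEIGHT) for kind in submission.change_kinds),
        default=0,
    )
    if highest <= 2:
        return Lane.AUTOMATED
    if highest <= 4:
        return Lane.SPOT_CHECK
    return Lane.FULL_REVIEW
```

Note that the routing keys off the single riskiest change rather than an average, so bundling a payment flow with a hundred text tweaks still lands in full review. The hard part is not this function. It is agreeing on, and publishing, the table at the top.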

Reviewing what changed, not the whole app, mirrors how most modern deployment pipelines work. But Apple’s review infrastructure was built in an era when apps were small, submissions were infrequent, and reviewers evaluated complete experiences. Retrofitting change-level inspection is a genuine engineering challenge: you need to reliably detect what’s different, analyze whether the changes affect sensitive areas, and trust that the rest of the app hasn’t been subtly altered. It’s doable, since Apple has world-class engineering, but it’s a large investment for a system that, from Apple’s perspective, isn’t broken. Review “works.” The people it frustrates are developers, and developers have nowhere else to go on iOS. Until that changes, the urgency is low.
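As a rough illustration of the "detect what’s different" step, the sketch below hashes every file in two builds and flags changes under sensitive paths. The path prefixes and function names are hypothetical, and a real system would have to reason about compiled binaries and runtime behavior, which is far harder than comparing files.

```python
import hashlib

# Hypothetical path prefixes that would trigger closer inspection.
SENSITIVE_PREFIXES = ("Payments/", "Entitlements/", "Tracking/")


def manifest(files: dict[str, bytes]) -> dict[str, str]:
    """Map each file path to the SHA-256 digest of its contents."""
    return {path: hashlib.sha256(data).hexdigest() for path, data in files.items()}


def changed_paths(old: dict[str, str], new: dict[str, str]) -> set[str]:
    """Return paths that were added, removed, or modified between builds."""
    return {path for path in old.keys() | new.keys() if old.get(path) != new.get(path)}


def needs_human_review(old: dict[str, str], new: dict[str, str]) -> bool:
    """True if any changed path falls under a sensitive prefix."""
    return any(path.startswith(SENSITIVE_PREFIXES) for path in changed_paths(old, new))


previous_build = manifest({
    "Settings/strings.txt": b"Dark mode",
    "Payments/checkout.bin": b"v1",
})
copy_only_update = manifest({
    "Settings/strings.txt": b"Dark theme",
    "Payments/checkout.bin": b"v1",
})
payment_update = manifest({
    "Settings/strings.txt": b"Dark mode",
    "Payments/checkout.bin": b"v2",
})

print(needs_human_review(previous_build, copy_only_update))  # False
print(needs_human_review(previous_build, payment_update))    # True
```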

Rewarding good actors with speed — letting developers with long track records and clean histories move through faster lanes — is the most natural fit for Apple’s worldview. It rewards compliance, punishes repeat offenders, and lets Apple maintain control. The obstacle is probably simpler than it looks: building a trust score means building a system that can be gamed, which means building a system that detects gaming, which means ongoing operational cost for a team that Apple treats as overhead, not product. App Review is a cost center. Cost centers don’t get ambitious new feature development. They get headcount freezes and efficiency mandates.
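A trust score does not need to be exotic. One possible shape, sketched here under my own assumptions (the `ReviewOutcome` record, the 180-day half-life, and the scoring rule are all made up for illustration), is a recency-weighted approval rate: recent outcomes count fully, old ones fade.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewOutcome:
    approved: bool
    days_ago: int


def trust_score(history: list[ReviewOutcome], half_life_days: float = 180.0) -> float:
    """Recency-weighted approval rate in [0, 1]; 0.0 when there is no history."""
    weighted_approvals = 0.0
    total_weight = 0.0
    for outcome in history:
        # An outcome loses half its influence every half_life_days.
        weight = 0.5 ** (outcome.days_ago / half_life_days)
        total_weight += weight
        if outcome.approved:
            weighted_approvals += weight
    return weighted_approvals / total_weight if total_weight else 0.0
```

A developer with no history scores zero rather than one, so a fresh account cannot buy its way into the fast lane. That is also where the gaming starts: the obvious attack is to farm cheap approvals before shipping something risky, which is why the score alone cannot be the whole system.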

Post-release monitoring with rapid takedown is perhaps the biggest philosophical shift. It means accepting that some bad apps will briefly reach users, a trade Apple’s culture viscerally resists. The App Store’s identity is built on the promise that everything in it has been vetted. “Ship now, verify continuously” works for the web and for Android, but Apple would need to reconcile it with the brand promise that makes the App Store worth a commission of up to 30%. This isn’t impossible (it’s basically how Apple already handles Mac software through Notarization), but it requires trusting detection systems more than prevention systems, and that’s a cultural transition, not a technical one.

Smarter automated screening is already happening, incrementally. Apple uses automated scanning for privacy compliance, malware, and certain policy violations. The gap is that automation currently assists human reviewers rather than replacing them for routine updates. Closing that gap requires confidence that the automated systems won’t miss something that becomes a headline. And the reputational math is brutal: a thousand apps cleared faster generates no press coverage, but one scam app that slipped through automated review generates a news cycle.

The most elegant path forward might be one Apple already has a blueprint for: extend the Notarization model to iOS app updates. Automated integrity and security checks on every submission, always. Policy review targeted only at changes that touch sensitive areas. The EU’s alternative distribution framework already normalizes this exact architecture. There’s no technical reason the main App Store couldn’t adopt it — only institutional ones.

Regulatory Pressure Might Force the Timeline

Apple might not fix this on its own schedule, but regulators are starting to make the review process itself a target.

The UK’s Competition and Markets Authority recently secured commitments from Apple and Google around app store fairness and transparency — responding to developer complaints about inconsistent, opaque treatment. The DMA in Europe is applying pressure from a different angle: as alternative marketplaces mature, the App Store’s review process will be benchmarked against competitors who may offer lighter, faster alternatives.

Neither of these directly mandates “faster review.” But transparency requirements have a way of cascading. When you’re forced to publish clear rules, you lose the ability to hold apps for ambiguous reasons. When you’re forced to justify rejection timelines, you lose the ability to let submissions sit in limbo. The velocity tax shrinks not because regulators demand speed, but because they demand the accountability that makes arbitrary slowness harder to sustain.

What You Can Do Today (Even If Apple Changes Nothing)

If iteration velocity is your moat, don’t wait for Apple to redesign review. Treat it as a deployment constraint and engineer around it.

Feature flags and remote configuration are your most powerful tool. Ship the broadest set of features and flows your app might need in a single approved version, and toggle experiments on and off from your server. Stay within Apple’s expectation that your app’s core functionality doesn’t secretly morph without review, but use the flexibility you have aggressively.
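Here is a minimal sketch of the flag-evaluation logic, written in Python for brevity; in a real app the equivalent would live in your Swift client or in a remote-config SDK. The flag names, the JSON shape, and the `is_enabled` helper are all hypothetical.

```python
import hashlib
import json

# Stand-in for a payload fetched from your own config server.
REMOTE_CONFIG_JSON = """
{
  "new_onboarding": {"enabled": true, "rollout_percent": 20},
  "ai_sticker_pack": {"enabled": false, "rollout_percent": 100}
}
"""


def bucket(user_id: str, flag: str) -> int:
    """Deterministically map a (flag, user) pair to a bucket from 0 to 99."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag: str, user_id: str, config: dict) -> bool:
    """A flag is on only if it exists, is enabled, and the user is in the rollout."""
    rule = config.get(flag)
    if rule is None or not rule.get("enabled", False):
        return False  # unknown or disabled flags fall back to shipped behavior
    return bucket(user_id, flag) < rule.get("rollout_percent", 0)


config = json.loads(REMOTE_CONFIG_JSON)
print(is_enabled("ai_sticker_pack", "user-42", config))  # False: flag is disabled
```

Hashing the flag name together with the user ID keeps each user in a stable bucket per experiment while avoiding the same users landing in every test. Changing `rollout_percent` on the server then starts, widens, or kills an experiment without a new submission.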

Server-driven content lets you iterate on anything that qualifies as content rather than app behavior. If your experiments are about what users see rather than what the app does, you can move fast without touching the approved version.
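One way to keep that line clear in code is to have the approved binary own a fixed set of renderers while the server only chooses which ones to use and with what text. The sketch below is an assumption about how such a client might be structured (the block types and payload shape are invented), again in Python for brevity.

```python
import json


def render_banner(block: dict) -> str:
    return f"[BANNER] {block['text']}"


def render_tip(block: dict) -> str:
    return f"Tip: {block['text']}"


# The approved binary ships a fixed set of renderers. The server can
# reorder and reword blocks, but it cannot introduce new behavior.
RENDERERS = {"banner": render_banner, "tip": render_tip}


def render_screen(payload: str) -> list[str]:
    """Render the server's blocks in order, skipping unknown block types."""
    lines = []
    for block in json.loads(payload)["blocks"]:
        renderer = RENDERERS.get(block.get("type"))
        if renderer is None:
            continue  # older app versions ignore types they don't know
        lines.append(renderer(block))
    return lines


payload = json.dumps({
    "blocks": [
        {"type": "banner", "text": "New sticker styles this week"},
        {"type": "carousel", "items": []},
        {"type": "tip", "text": "Long-press a sticker to edit it"},
    ]
})
print(render_screen(payload))
# ['[BANNER] New sticker styles this week', 'Tip: Long-press a sticker to edit it']
```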

Batch your app submissions, stream your experiments. Submit less frequently to the App Store; run more experiments between submissions. Each approved version becomes a platform for dozens of server-side iterations.

Use expedited review strategically for genuine production incidents. Don’t burn goodwill on non-emergencies, but don’t let a critical bug linger behind a 24-hour queue either.

Plan your release mechanics. Submit early. Set manual release after approval so you control timing. Avoid holiday periods when Apple has explicitly warned that reviews slow down. Treat the review timeline as an input to your sprint planning, not an afterthought.
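If you log your own review durations, you can turn "submit early" into a number. The sketch below picks a high percentile of past review times, adds a buffer, and works backward from the release date. The sample durations are made up for illustration, and the 90th percentile and 12-hour buffer are arbitrary defaults, not recommendations.

```python
import math
from datetime import datetime, timedelta


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latest_submit_time(
    release_at: datetime,
    past_review_hours: list[float],
    pct: int = 90,
    buffer_hours: float = 12,
) -> datetime:
    """Latest submission time that still leaves room for a slow review."""
    budget_hours = percentile(past_review_hours, pct) + buffer_hours
    return release_at - timedelta(hours=budget_hours)


# Illustrative review durations in hours; substitute your own history.
sample_hours = [4, 6, 8, 10, 12, 20, 24, 30, 48, 140]
release = datetime(2026, 3, 12, 9, 0)
print(latest_submit_time(release, sample_hours))  # 2026-03-09 21:00:00
```

Planning against a high percentile rather than the average is the point: the occasional multi-day review is what wrecks a launch date, and an average hides it.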

None of this fully solves the problem. You’re still working around a constraint that shouldn’t exist for low-risk changes. But the teams that treat App Review as a known engineering constraint — like latency or memory limits — rather than an unpredictable act of God are the ones that ship fastest on iOS today.

The Bottom Line

If iteration speed is the moat — and in an AI-native world, it increasingly is — then App Store Review is one of the biggest velocity taxes on the iOS platform.

But the AI era also amplifies the need for trust gates. Cheap building means cheap abuse. The answer isn’t to tear down Apple’s review process. It’s to reshape it.

The tools exist: risk-based routing, change-level inspection, trust scoring, smarter automation, post-release enforcement. The reason Apple hasn’t built them isn’t that they’re technically impossible. It’s that App Review is a cost center defending a brand promise in an organization that benefits from the ambiguity of the current system. That calculus holds as long as developers have no alternative on iOS and regulators aren’t watching closely.

Both of those conditions are changing. The question is whether Apple adapts before the velocity tax drives the best builders to platforms that don’t charge it, or whether, as usual, it waits until the pressure is undeniable and then acts as if the idea had been its own all along.